242-2009: More than Just the Kappa Coefficient: A Program to Fully Characterize Inter-Rater Reliability between Two Raters

Agree or Disagree? A Demonstration of An Alternative Statistic to Cohen's Kappa for Measuring the Extent and Reliability of Ag

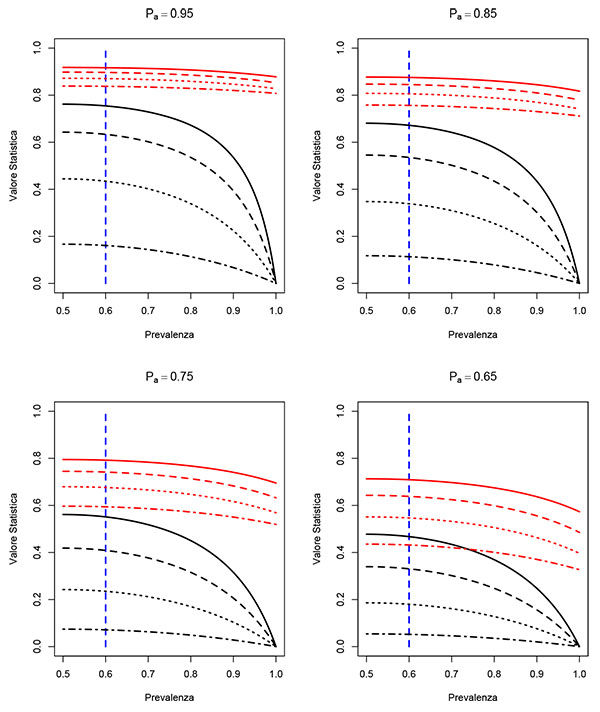

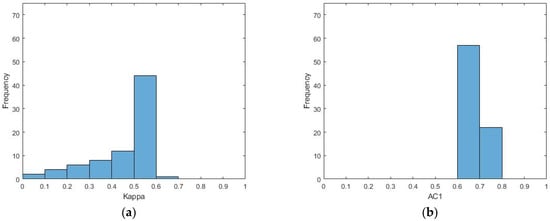

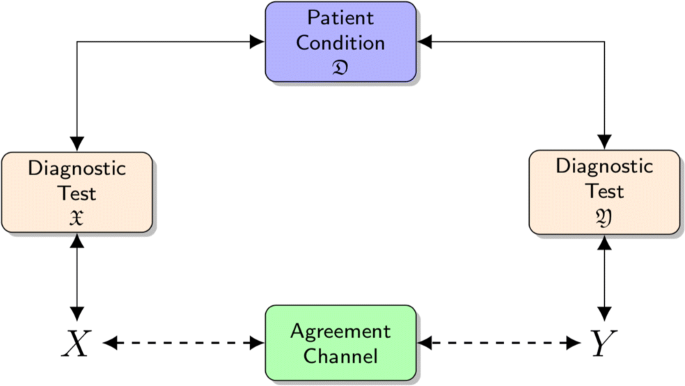

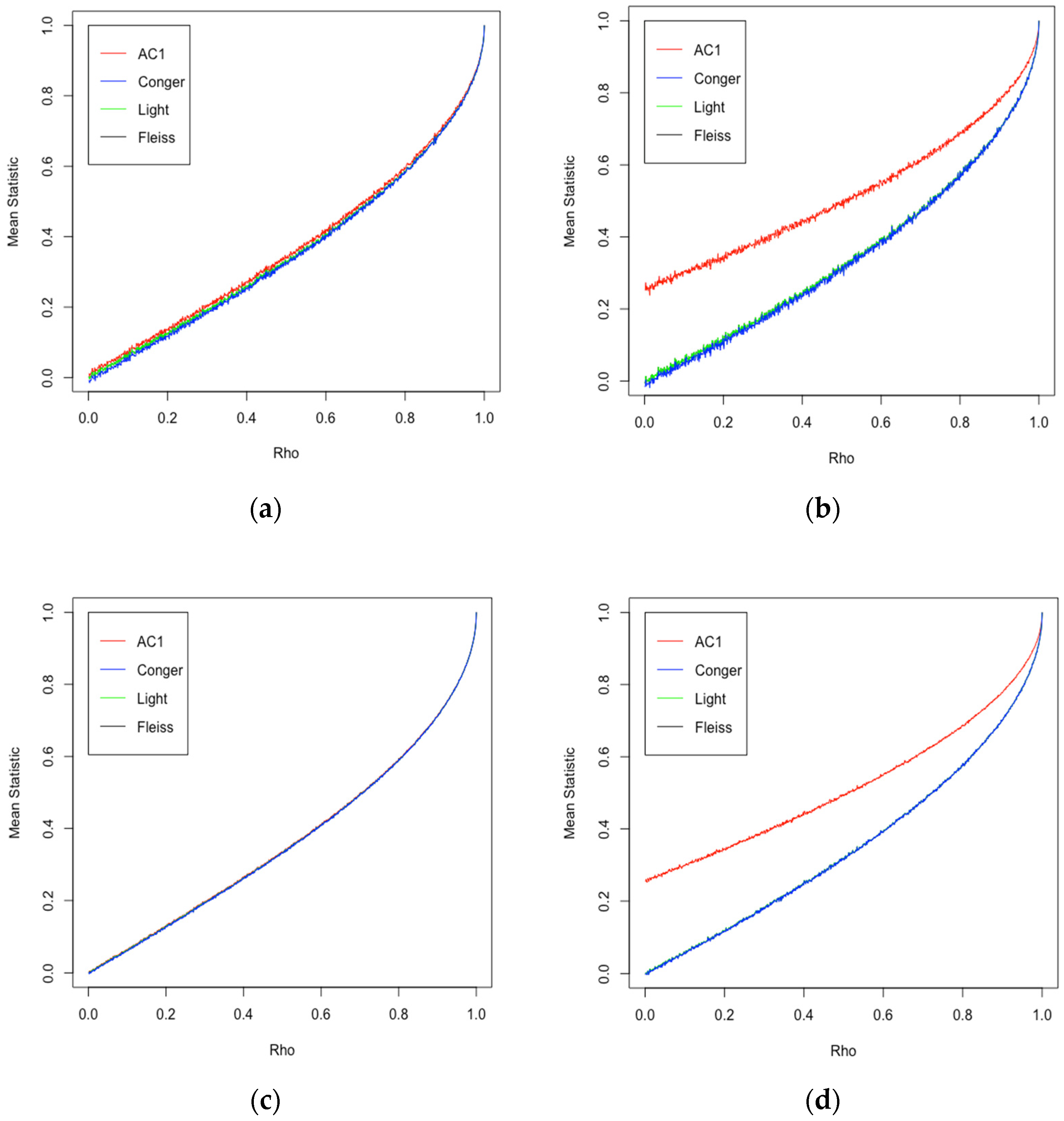

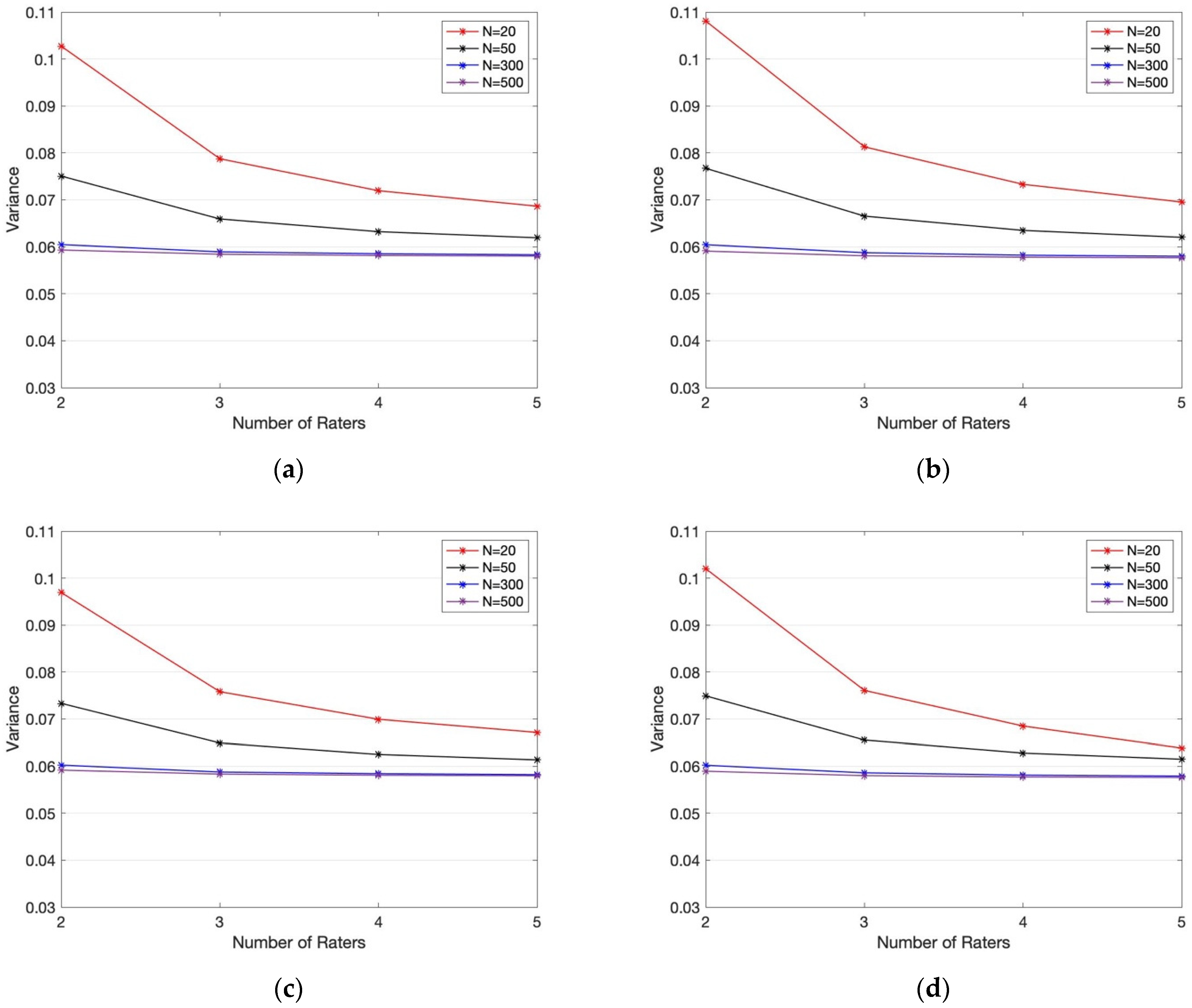

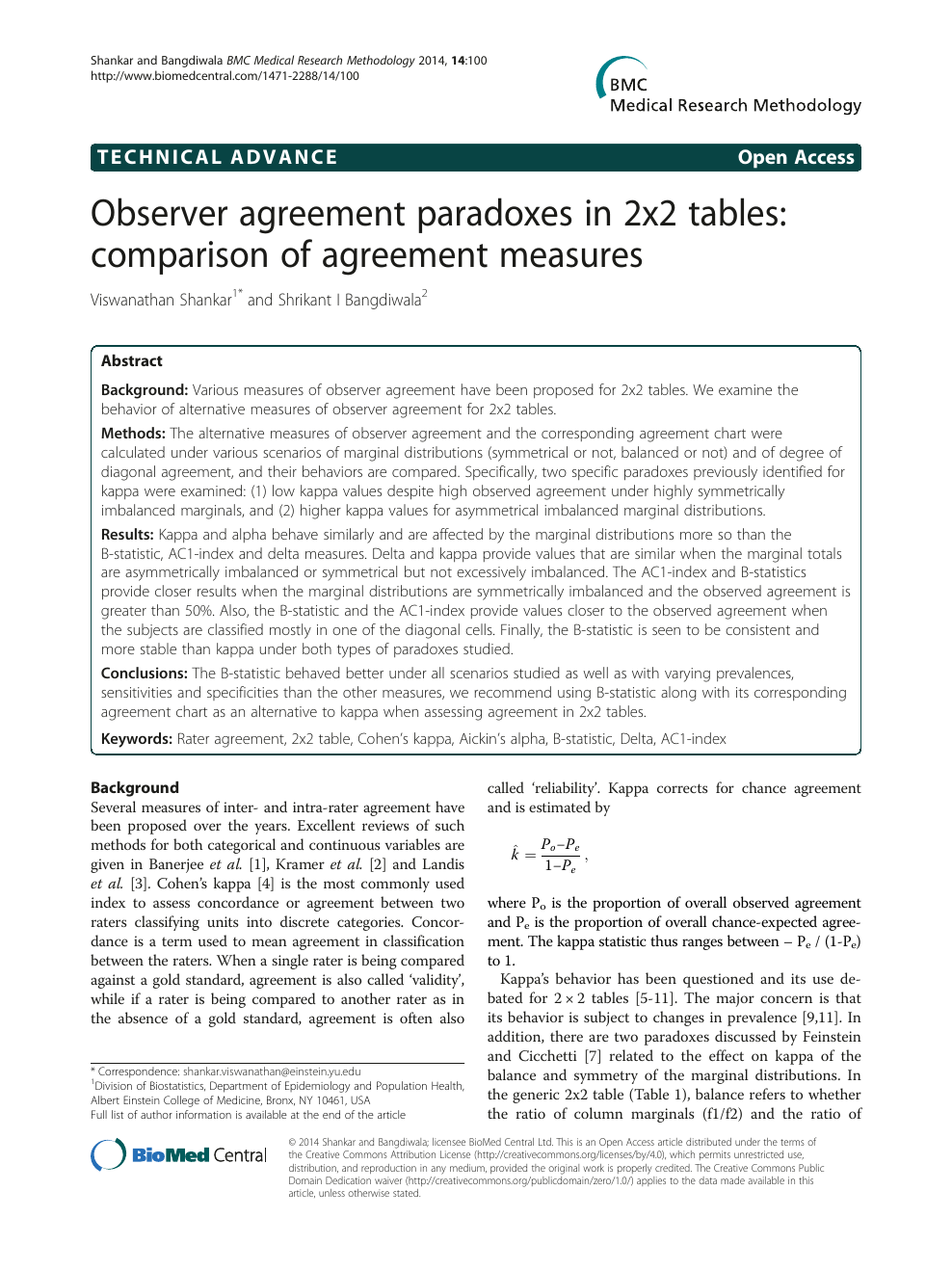

Symmetry | Free Full-Text | An Empirical Comparative Assessment of Inter-Rater Agreement of Binary Outcomes and Multiple Raters | HTML

Beyond kappa: an informational index for diagnostic agreement in dichotomous and multivalue ordered-categorical ratings | SpringerLink

Kappa Statistic is not Satisfactory for Assessing the Extent of Agreement Between Raters | Semantic Scholar

![More than Just the Kappa Coefficient: A Program to Fully Characterize Inter-Rater Reliability between Two Raters | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/79de97d630ca1ed5b1b529d107b8bb005b2a066b/1-Figure1-1.png)

Systematic literature reviews in software engineering—enhancement of the study selection process using Cohen's Kappa statistic - ScienceDirect

Observer agreement paradoxes in 2x2 tables: comparison of agreement measures | CyberLeninka

![High Agreement and High Prevalence: The Paradox of Cohen's Kappa | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/c348c2b3ab5510a4c0576e93747355a1d63a7347/2-Table1-1.png)